Blog

Insights on voice AI, emotional intelligence, and the future of customer research.

Research & Insights

5 Free Tools to Run Better Customer Research, Starting Today

Five free tools from ReadingMinds to write better invites, remove question bias, calculate sample sizes, pick research methods, and score survey questions.

Stu Sjouwerman•April 3, 2026

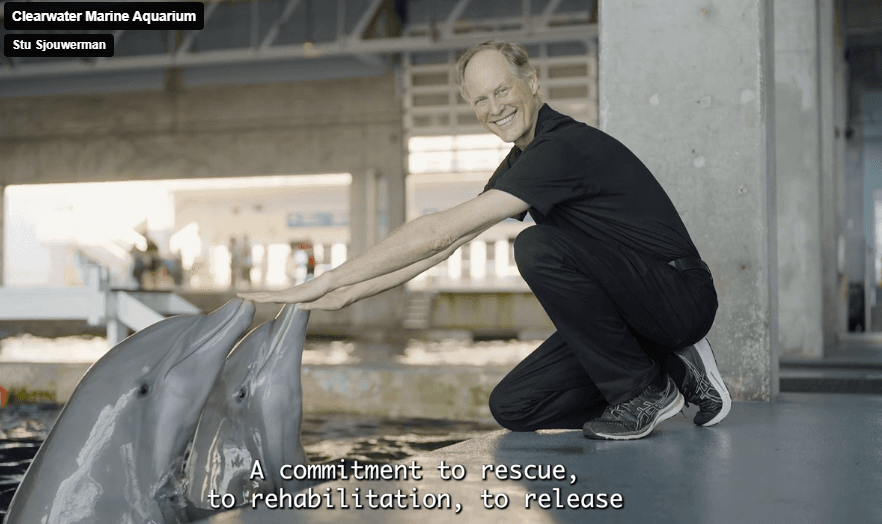

Product & Company

ReadingMinds Supports Clearwater Marine Aquarium: Rescue, Rehab, Return

ReadingMinds is proud to support Clearwater Marine Aquarium and their mission to rescue, rehabilitate, and return marine life.

Stu Sjouwerman•April 1, 2026

Research & Insights

Thank You to Insight Platforms and Their Work for the Insights Community

A thank you to Insight Platforms for their Corporate Researcher’s Guide series and independent educational work for insights teams.

Stu Sjouwerman•March 31, 2026

Research & Insights

After Your First 20 Interviews: What You Will Actually Change in 30 Days

What smart teams change after 20 AI voice interviews: messaging, demo talk tracks, onboarding friction, and renewal risk signals.

Stu Sjouwerman•March 30, 2026

Voice AI

How We Classify 6 Emotions from Voice: The Technical Architecture Behind the Emotional Fingerprint

How ReadingMinds turns voice into 6 emotions with 1-9 intensity scoring using a two-layer architecture. Download the technical backgrounder.

Stu Sjouwerman•March 30, 2026

AI Strategy

Why AI Agents Need a Model-Agnostic Emotional Evidence Layer Before They Act

AI agents are going operational, but acting without customer evidence is a liability. Here is why model-agnostic emotional evidence is the missing layer.

Stu Sjouwerman•March 27, 2026

AI Strategy

65% of CMOs Expect AI to Reshape Marketing, but Only 6% Have Real Workflows

Gartner says 65% of CMOs expect AI to reshape their role, yet only 6% have embedded it into workflows. The gap is not technology; it is signal quality.

Stu Sjouwerman•March 26, 2026

AI Strategy

From Action to Judgment: Why Decision Quality Is the Next AI Bottleneck

AI agents can act, but they fail without decision signals. The next phase of AI is not faster action; it is better judgment.

Stu Sjouwerman•March 25, 2026

AI Strategy

Insights Don't Drive Outcomes. Signals Do.

Dashboards and reports don't change outcomes. AI agents need structured, machine-readable emotional signals to make real decisions. The future of customer intelligence is not better summaries; it is emotional decision signals for AI agents.

Stu Sjouwerman•March 23, 2026

AI Strategy

The Next Layer of AI: Why Emotional Signals Will Power Agent Workflows

AI agents can execute tasks, but they still lack reliable insight into human behavior. The next wave of AI infrastructure will be defined by emotional signal: the missing input that determines whether agents make good decisions or bad ones.

Stu Sjouwerman•March 23, 2026

AI Strategy

The Next AI Breakthrough Isn't Smarter Agents. It's Better Judgment.

Everyone is talking about AI agents. But the next major breakthrough will not come from agents that do more things. It will come from agents that make better decisions before they act. That requires emotional signal infrastructure.

Stu Sjouwerman•March 21, 2026

Research & Insights

What Smart Teams Do Differently After Just 20 Customer Interviews

Most teams do not have a research problem. They have a speed-to-action problem. Here is what happens when you run 20 focused voice interviews, structure the signals, and push them straight into your workflows.

Stu Sjouwerman•March 20, 2026

AI Strategy

The New Growth Lever: Catching Churn Before It Starts (And How to Catch It Early)

Retention is quietly becoming the most important growth lever. Companies that operationalize AI-driven signals like engagement drops, sentiment shifts, and behavioral anomalies are seeing double-digit improvements in retention.

Stu Sjouwerman•March 20, 2026

AI Strategy

The 5-Step Agentic Marketing Blueprint: How to Build Campaigns That Learn and Adapt

Single-point AI automation is not agentic AI. This Forbes Technology Council piece lays out a practical 5-step blueprint for building marketing campaigns that input signals, make decisions, act, and learn from the result.

Stu Sjouwerman•March 19, 2026

Voice AI

Why People Don't Trust Bad AI Voices: What 10,000 Listeners Reveal About Synthetic Speech

A global study of 20 AI voice models and 10,000 listeners shows that trust collapses the moment a voice sounds artificial. The winners in conversational AI will not be the biggest models; they will be the ones that sound genuinely human.

Stu Sjouwerman•March 16, 2026

AI Strategy

The Next Layer of AI: Why Agent Infrastructure Needs Customer Emotion

AI agents are the new operators of software. But most research tools still produce outputs designed for humans. The platforms that transform voice conversations into structured emotional signals will define the next generation of agent infrastructure.

Stu Sjouwerman•March 15, 2026

AI Strategy

Why AI Agents Need Signal Endpoints, and What That Means for Customer Insight

AI agents are becoming the new users of software. Platforms that expose high-value signals like emotion classification, intensity scoring, and churn risk as structured endpoints will become foundational inputs for AI decision systems.

Stu Sjouwerman•March 14, 2026

Product & Company

ReadingMinds MCP Server Is Now GA: Secure Customer Truth as a Tool Call, Not a Dashboard

The ReadingMinds MCP Server is generally available. Claude, GPT, or custom agents can now query customer truth with scoped permissions, structured evidence packs, and privacy-minimizing defaults.

Stu Sjouwerman•March 14, 2026

Research & Insights

Kantar Says Moving Creative from Average to Best Lifts ROI 30%: Here's the Layer They're Missing

Kantar's predictive creative evaluation shows that improving ad quality from average to best can lift ROI by 30% or more. But AI scores alone cannot explain why creative works. Customer voice fills that gap.

Stu Sjouwerman•March 14, 2026

AI Strategy

The AI Maturity Gap: Where Your Insights Stack Really Stands (And Why It Matters)

Every company says it's using AI. But the latest benchmarks reveal a striking gap between companies experimenting with AI and those generating real business impact. Here is how to tell where you actually stand.

Stu Sjouwerman•March 14, 2026

AI Strategy

Voice Is the New Interface for Human + AI: Why the Next Great UX Won't Feel Like Software

Software interfaces have always been recycled from older worlds. Filing cabinets became databases, paper forms became webpages. The next interface for AI is not a chat box. It is voice.

Stu Sjouwerman•March 14, 2026

AI Strategy

75% of Marketing Teams Use AI, But Campaigns Still Feel Generic: Here's the Gap

AI adoption is everywhere in marketing. Transformation is not. The gap between deploying AI tools and actually understanding customers is where most teams are stuck.

Stu Sjouwerman•March 12, 2026

Emotion & CRM

How to Detect Emotion Drift and Prevent Silent Churn Before Customers Leave

Most companies listen to what customers say. The real signal is how they say it. Learn how emotion drift in voice conversations reveals churn risk weeks before it shows up in dashboards.

Stu Sjouwerman•March 10, 2026

Emotion & CRM

Turning Emotion Analytics into Actionable Revenue: How Voice Signals Power a RevOps Dashboard

Most dashboards measure what customers do. A voice-driven RevOps dashboard measures what customers feel while they're deciding. Here are the five voice signals that change pipeline visibility.

Stu Sjouwerman•March 9, 2026

AI Strategy

ReadingMinds Emotional Fingerprint for Agentic Marketing: Voice-Native Emotional Intelligence for AI Agents

Most marketing data tells you what customers say. The ReadingMinds Emotional Fingerprint introduces voice-native emotional intelligence to agentic AI marketing, classifying six core emotions with intensity scoring from voice.

Stu Sjouwerman•March 9, 2026

Research & Insights

95% of Researchers Now Use AI: 4 Takeaways from the 2026 Qualtrics Market Research Trends Report

Qualtrics surveyed 3,000+ researchers across 17 countries. The findings are clear: AI in market research is no longer optional, and the gap between leaders and laggards is widening fast.

Stu Sjouwerman•March 9, 2026

Emotion & CRM

6 Voice Signals That Predict Churn Before Sentiment Scores Do

Negative sentiment is a lagging indicator. The real early warning lives in micro-pauses, deflections, and prosody shifts that surface weeks before NPS drops. Here are the six voice signals to track.

Stu Sjouwerman•March 5, 2026

Research & Insights

The Soft No: Tracking Hesitation as Subtext

The 'soft no' rarely arrives as a clear rejection. It shows up first as hesitation: pauses, filler words, hedges. These signals reveal doubts and churn risk long before a customer explicitly says no.

Stu Sjouwerman•March 4, 2026

Emotion & CRM

Avoidance as the Strongest Churn Signal

The earliest churn signal is not anger or declining NPS. It is quiet avoidance: customers gradually stop engaging with the core workflow while still technically active. Here is how to detect it early.

Stu Sjouwerman•March 4, 2026

AI Strategy

$25.3B Evaporates Every Year: ~5.3% of the English-Speaking Software Market Lost to Churn

Using GDP-based country allocation, Gartner's global software totals, and SaaS churn benchmarks, we estimated annual gross revenue churn across major English-primary business markets. The result: roughly $25.3B lost every year.

Stu Sjouwerman•February 27, 2026

Research & Insights

Why Voice Unlocks What Surveys Miss

A new wave of research confirms that voice-led interviews produce richer emotional language and stronger recall than typed surveys. Here's why speaking changes everything for customer insight.

Stu Sjouwerman•February 26, 2026

Product & Company

Announcing the ReadingMinds Academy: Mastering Voice-First Customer Interviews

Today we're launching the ReadingMinds Academy: a practical training series to help marketing teams create high-impact voice interviews that produce sharper positioning, clearer messaging, and higher conversion.

Stu Sjouwerman•February 23, 2026

AI Strategy

Why Trust Must Be Engineered Into AI Products, Not Bolted On

In a world flooded with AI-generated content, cloned voices, and synthetic data, trust has quietly become the most valuable asset in marketing. Here's why it must be engineered into the product itself.

Stu Sjouwerman•February 22, 2026

AI Strategy

Generative + Predictive + Agentic: The Three-Layer AI Stack That Closes the Loop From Insight to Revenue

71% of marketers plan to deploy generative and predictive AI in the next 18 months. But the winners will add an agentic layer that acts, not just creates and forecasts.

Stu Sjouwerman•February 14, 2026

AI Strategy

Your Insights Dashboard Is About to Die: How to Become Agent-Ready (Before You're "Just Reports")

Customer insight is moving from dashboards people visit to tools agents call. That's not a small UX tweak. That's a category reset. Here's how to survive it.

Stu Sjouwerman•February 13, 2026

Voice AI

What "Voice-Verified" Should Really Mean

As AI gets better at talking, a hard question pops up fast: Who's actually on the other end of the conversation? Voice-verified can't just be a buzzword.

Stu Sjouwerman•February 8, 2026

Emotion & CRM

From Listening to Launching: Turning Customer Emotion Into Same-Day Revenue

Most companies are proud of how well they 'listen' to customers. Then nothing happens for weeks. Here's the operating loop that turns signal into spend into revenue in the same day.

Stu Sjouwerman•February 8, 2026

Emotion & CRM

Emotional Intelligence Wasn't the Next Layer. It Was the Missing One.

AI could hear us. It could answer us. But it couldn't understand us. That's now changing, and not all at once.

Stu Sjouwerman•January 28, 2026

Product & Company

We Hit the List! Why "Agent-Powered Growth" is the Playbook for 2026

Announcing the debut of "Agent-Powered Growth" at #17 on the USA Today bestseller list.

Stu Sjouwerman•January 23, 2026

Voice AI

What is "Voice Pilling"?

To be "voice-pilled" means recognizing that speech is the superior way to capture true consumer intent at scale.

Stu Sjouwerman•January 20, 2026

Research & Insights

Evaluative Research: Proving What Works (and What Doesn't)

How evaluative research validates specific solutions by combining rigorous testing with AI-driven insights.

Stu Sjouwerman•January 20, 2026

Research & Insights

AI Market Research Analyst In-a-Box: Enterprise-Grade Insights for Mid-Market Teams

The AI-powered "Analyst-in-a-Box" model enables mid-market teams to conduct hundreds of interviews simultaneously.

Stu Sjouwerman•January 20, 2026

Research & Insights

Turning AI Interviews into Action: Research Ideas to Inspire Your Team

A seven-step framework for converting qualitative conversations into actionable business intelligence.

Stu Sjouwerman•January 20, 2026

AI Strategy

2025 State of Marketing AI: Momentum Meets a Massive Skills Gap

The report reveals that 74% of marketers view AI as critical, yet 62% face barriers due to a lack of education.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

How to Turn Customer Emotions into Marketing Insights with Voice AI

Using voice-to-voice AI to generate deep marketing insights and probe customer sentiment at scale.

Stu Sjouwerman•January 20, 2026

AI Strategy

AI in Marketing Has Hit $47B: Why the Shift From Optional to Strategic Is Accelerating

AI in marketing has shifted from optional to strategic, and the global market is projected at $47 billion.

Stu Sjouwerman•January 20, 2026

Product & Company

Self-Serve by Design: Why ReadingMinds Is a Complete No-Brainer

B2B buyers prefer self-service, and ReadingMinds delivers voice-interview insights in hours.

Stu Sjouwerman•January 20, 2026

Research & Insights

Why Market Research Is Getting More Human

The industry is moving toward human-centric research, using AI to scale empathy and quantify qualitative insights.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

How to Predict Churn Before It Happens: A Guide to Emotional Intelligence in Retention

Emotional intelligence helps identify at-risk customers before they cancel, turning dashboards into predictive tools.

Stu Sjouwerman•January 20, 2026

Voice AI

Why Word Error Rate Alone Can't Tell You How Good Your Voice AI Really Is

While Word Error Rate (WER) is the industry standard for measuring AI voice accuracy, it fails to account for human emotion and prosody.

Stu Sjouwerman•January 20, 2026

Voice AI

The Voice Awakening: How AI Is Learning to Talk, and Listen, Like Us

Voice technology is crossing a threshold from passive tools to active AI agents capable of holding natural, expressive conversations.

Stu Sjouwerman•January 20, 2026

AI Strategy

What Microsoft's Latest Research Tells Us About the Future of AI-automated Jobs

Microsoft Research's 2025 study identifies 40 occupations most and least susceptible to AI and automation.

Stu Sjouwerman•January 20, 2026

AI Strategy

Accountable Acceleration: Why Generative AI is the New Benchmark for Mid-Market Leaders

Weekly generative AI usage jumped from 37% in 2023 to 82% in 2025, marking a shift toward bespoke solutions.

Stu Sjouwerman•January 20, 2026

Product & Company

10× Faster Insights: What One ReadingMinds Beta Participant Discovered After Letting AI Run Their Customer Interviews

AI interviews can deliver both the scale of a survey and the depth of a human conversation.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

Stop Reporting Churn. Start Preventing It.

Traditional retention strategies often fail because they rely on lagging churn reports that identify issues only after a customer has decided to leave.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

The Next Interface is No Interface At All: The Rise of Emotionally Intelligent AI

The true breakthrough is the removal of the interface altogether through emotionally intelligent AI systems.

Stu Sjouwerman•January 20, 2026

Product & Company

The Why-Layer: A Technical Deep Dive into ReadingMinds in the Modern Marketing Stack

How ReadingMinds generates emotional and causal signals to supplement data warehouses and CDPs.

Stu Sjouwerman•January 20, 2026

Research & Insights

GenAI Isn't Experimental Anymore: It's Becoming the Insights Engine

GenAI has transitioned from buzzword to research backbone, automating survey design and qualitative analysis.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

How AI Runs Thousands of Emotionally Intelligent Interviews in Parallel

AI-powered voice assistants conduct thousands of emotionally intelligent conversations in parallel, compressing research cycles from weeks to hours.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

How Salesforce's Sentiment Insights Shows the Future of AI-Driven Customer Understanding

Customer subtext intelligence transforms qualitative cues into actionable data for predicting customer loyalty.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

Why Emotion Data Will Become the Next Competitive Advantage in CRM

Emotion data, the measurable metadata of tone, anger, and enthusiasm, enables 30% faster churn prediction.

Stu Sjouwerman•January 20, 2026

AI Strategy

WSJ: Layoffs Expected as Marketers Face Pressure Over AI Savings

Nearly 50% of enterprise CMOs expect headcount reductions from AI adoption, but a gap remains between investment and returns.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

When Emotion Becomes Data: The Next Shift in Customer Intelligence

Emotional signals as structured data enable teams to predict churn and prioritize high-momentum leads.

Stu Sjouwerman•January 20, 2026

Research & Insights

When AI Becomes the Research Workflow, Not the Tool

Autonomous AI workflows allow researchers to focus on high-level judgment and strategy while delivering insights at 10× the traditional speed.

Stu Sjouwerman•January 20, 2026

Emotion & CRM

From Records to Relationships: Why the Next CRM Leap Is Emotional Intelligence

Moving beyond systems of record to capture real-time emotional signals including language, tone, and intent.

Stu Sjouwerman•January 20, 2026

Research & Insights

10× Faster Insights With Synthetic Data: How it Works

Synthetic data allows instant simulation of consumer responses, serving as a research multiplier for high-stakes decisions.

Stu Sjouwerman•January 20, 2026

Product & Company

Introducing Agent-Powered Growth: The Playbook for AI-Driven Customer Strategy

A guidebook described as 'a must-read for business leaders ready to turn AI into real results.'

Stu Sjouwerman•January 13, 2026